Is Your CTO Using a Vector Database?

Jul 25, 2023

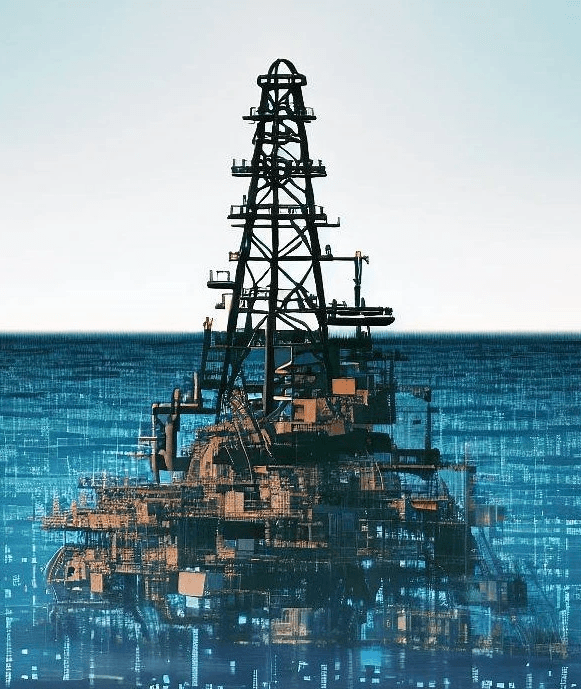

You have likely heard the phrase “Data is the new oil” or “Data is the new gold.” Essentially, your company is sitting on an immense amount of valuable raw material. Vector databases and GenAI are the rig and refinery that tap its value. This unstructured data comes in many forms: text in documents that can be the basis of a question-answering system for internal or external customer service, images that can be used to match a customer’s interests or purchase preferences, and video used to estimate distance so that a fully automated car drives safely.

What Are Vector Databases and Embeddings?

Vector databases (or “vector stores”) and embeddings allow large unstructured data sets with many attributes (aka “high-dimensional data”) to be stored, queried, and manipulated efficiently. Each object (or data point) is given a numerical representation (a “vector embedding”), like a point on a map. Similar objects are located close to each other, and unrelated objects are farther apart. For example, a maroon shirt will be much closer to a red shirt than to a notebook (an unrelated object). Vector stores measure the distance between objects on the map mathematically, allowing a computer to process contextual relationships.
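To make the “points on a map” idea concrete, here is a minimal sketch in plain Python. The three-dimensional vectors and their values are hand-made assumptions for illustration only; real embeddings come from a trained model and typically have hundreds or thousands of dimensions. Cosine similarity is used here as one common way to measure closeness, not necessarily the one any particular vector store uses by default.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical, hand-made "embeddings" (not produced by any model).
# Dimensions, loosely: [is clothing, redness, is stationery]
embeddings = {
    "maroon shirt": [0.9, 0.7, 0.0],
    "red shirt": [0.9, 0.9, 0.0],
    "notebook": [0.0, 0.1, 0.9],
}

query = "maroon shirt"

# Score every other object against the query object.
scores = {
    name: cosine_similarity(embeddings[query], vector)
    for name, vector in embeddings.items()
    if name != query
}

# Print the most similar objects first.
for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {score:.3f}")
```

With these toy values, the red shirt scores far higher against the maroon shirt than the notebook does, which is the behavior the map analogy describes.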

What Does This Mean?

Let’s take a traditional library where you can search for books by title, author, or topic. Now imagine a library where you can find books that ‘feel’ similar, not just by apparent categories but also by nuanced themes, settings, writing style, or even the emotions they evoke. A vector database is like that. Each book in our imaginary library represents a piece of data or object. The ‘feel’ of the book (its themes, style, and emotions) can be thought of as a set of characteristics or features. These features are represented as vector embeddings, or points on the map.

Vector databases not only store data but also capture attributes such as sentiment, classification, and sentence similarity. You can quickly find other pieces of data with a similar ‘sentiment’ or structure (for example, for plagiarism detection). Traditional structured databases, built on row and column stores, find this virtually impossible. It’s like a super-efficient search tool that goes beyond keyword matching to understand the ‘essence’ of what you’re looking for.

Why Does this Matter?

Many executives are planning and starting to execute their AI strategies. Some companies even have a Chief AI Officer. At Yeager.ai, we’ve engaged many companies across a broad spectrum of verticals (Pharma, Aviation, Financial Services, Media, Research, and more). Conversations boil down to two key objectives:

Leverage the existing knowledge base to improve existing operations

Ingest many sources and formats of data to produce company-specific work product

A major problem common to both objectives is unstructured data. Unstructured data is usually text-heavy: anything that doesn’t fit neatly into your elegant Excel model (emails, customer reviews, product specs, call transcripts, website copy, internal memos, etc.).

Enter vector stores and LLMs (“Large Language Models,” aka “Generative AI models”). To quote ChatGPT, “a match made in computational heaven.”

A layer of LLMs can transform unstructured data (e.g., an email) into usable data points in a vector database (i.e., “vector embeddings”). This is the major breakthrough that most people aren’t aware of. Vector embeddings are like a secret language for computers. They help computers understand the connections between words or things, for example that cats and dogs are both types of pets, and that red and maroon are similar colors.

With vector embeddings in place, LLMs can use vector databases to generate intelligent output. For example, before prompting an LLM, you can query the vector database for similar past instances and include them as relevant input in the prompt. This lets LLMs work around their context-size limits: instead of loading everything into the prompt, only the most relevant pieces are retrieved, so the model can draw on far more data than would fit at once. LLMs rely on immense amounts of previously encountered data (“memory” in GenAI terms). Vector stores allow LLMs to store and retrieve these memories efficiently, streamlining a process that is otherwise expensive in both time and money.
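The query-then-prompt flow described above can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `embed` is a toy word-count stand-in for a real embedding model, the in-memory `index` list stands in for a vector database, and the sample documents and the helper names `retrieve` and `build_prompt` are hypothetical. No actual LLM is called; the sketch stops at assembling the prompt.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical knowledge-base snippets, embedded once up front.
documents = [
    "Refunds are issued within 14 days of a returned purchase.",
    "Our office is closed on public holidays.",
    "Shipping to Europe takes five to seven business days.",
]
index = [(doc, embed(doc)) for doc in documents]  # stand-in for a vector store


def retrieve(question, k=1):
    """Return the k stored documents most similar to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine_similarity(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(question, k=1):
    """Assemble a prompt containing only the retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


print(build_prompt("How long do refunds take?"))
```

The design point is that the prompt carries only the top-ranked snippets rather than the whole knowledge base, which is why the size of the stored data is no longer bounded by the model's context window. In a real system, the toy pieces would be replaced by an embedding model and a vector database such as Qdrant.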

Everyone wants to “train a model on their company’s knowledge base.” But what does this mean? GenAI models use vector databases loaded with existing data (i.e., the company knowledge base) to identify patterns and relationships, and use these to generate new, relevant content. This can create a learning flywheel: newly generated content is stored and reused in future tasks, an iterative process in which the system is essentially self-teaching and self-improving.

Potential Real-World Use Cases

Talent Acquisition: HR departments often have to sift through a vast amount of unstructured data such as resumes, cover letters, and interview transcripts and notes. By leveraging GenAI and vector stores, businesses can streamline the hiring process. For example, when a new job posting is created, GenAI can compare the job requirements with the resumes in the database to shortlist suitable candidates and draft an outreach message (email or LinkedIn).

Content Curation: Media companies with vast amounts of content (articles, videos, podcasts) could use GenAI to vectorize this content based on various factors like genre, length, and popularity. When users interact with the platform, their behavior can be vectorized and compared with content vectors to curate a personalized list of content that aligns with their preferences. Then GenAI can draft highly personalized content for specific users.

Account Reconciliation: Auditors compare ledger balances with bank statements, invoices with receipts, expenses with payments, etc. These records often come from different sources and are unstructured and voluminous. GenAI can perform similarity searches in the vector database to identify data points that should theoretically match (e.g., an invoice and a corresponding payment). It can flag any inconsistencies for further review and suggest additional information. Moreover, the system can learn from the corrections made during these reviews and add the learnings to its memory.

So, What is Your CTO’s Unstructured Data Strategy?

The value of vector databases and embeddings is clear, and there are clear business use cases. As companies move to adopt AI, a general understanding of vector databases and embeddings is critical to unlocking the true value of unstructured data. At Yeager.ai, vector databases and embeddings are a fundamental layer in our 🧬🌍 GenWorlds framework, allowing our customers to build bespoke collaborating AI Agents trained on their company’s knowledge base. We chose Qdrant for our vector DB because of its versatility (it runs in the cloud or on-prem) and its efficiency (light on dependencies and fast). We encourage businesses to get educated on vector databases to stay ahead of the GenAI adoption game.

About Qdrant

Qdrant is a high-performance, scalable, secure, open-source similarity (vector) search engine and database, essential for building the next generation of AI/ML applications. Qdrant was founded out of Berlin in 2021 by Andre Zayarni and Andrey Vasnetsov, and is backed by over $9.7 million in seed funding from Unusual Ventures, 42cap, IBB Ventures, and a handful of angel backers, including Cloudera co-founder Amr Awadallah. Qdrant is a partner of AWS, Cohere, DocArray, LangChain, LlamaIndex, and OpenAI. To see how Qdrant can help you, visit http://qdrant.tech

About Yeager

At Yeager.ai, we are on a mission to enhance the quality of life through the power of Generative AI. Our goal is to eliminate the burdensome aspects of work by making GenAI reliable and easily accessible. By doing so, we foster a conducive environment for learning, innovation, and decision-making, propelling technological advancement.

Learn More About 🧬🌍GenWorlds

Demo: https://youtu.be/INsNTN4S680

GitHub: https://github.com/yeagerai/genworlds

Docs: https://genworlds.com/docs/intro

Discord: https://discord.gg/wKds24jdAX